Have you ever used a new program or system and found it to be obnoxiously buggy, but then after a while you didn’t notice the bugs anymore? If so, then congratulations: you have been trained by the computer to avoid some of its problems. For example, I used to have a laptop that would lock up to the point where the battery needed to be removed when I scrolled down a web page for too long (I’m guessing the video driver’s logic for handling a full command queue was defective). Messing with the driver version did not solve the problem and I soon learned to take little breaks when scrolling down a long web page. To this day I occasionally feel a twinge of guilt or fear when rapidly scrolling a web page.

The extent to which we technical people have become conditioned by computers became apparent to me when one of my kids, probably three years old at the time, sat down at a Windows machine and within minutes rendered the GUI unresponsive. Even after watching which keys he pressed, I was unable to reproduce this behavior, at least partially because decades of training in how to use a computer have made it very hard for me to use one in such an inappropriate fashion. By now, this child (at 8 years old) has been brought into the fold: like millions of other people he can use a Windows machine for hours at a time without killing it.

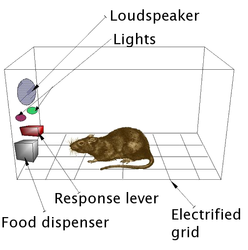

Operant conditioning describes the way that humans (and of course other organisms) adapt their behavior in response to the consequences resulting from that behavior. I drink beer at least partially because this has made me feel good, and I avoid drinking 16 beers at least partially because that has made me feel bad. Conditioning is a powerful guiding force on our actions and it can happen without our being aware of it. One of my favorite stories is where a psychology class trained the professor to lecture from the corner by paying attention when he stood in one part of the room and looking elsewhere when he did not. (This may or may not be only an urban legend, but seems plausible even so.) Surely our lives are filled with little examples of this kind of unconscious guidance from consequences.

How have software bugs trained us? The core lesson that most of us have learned is to stay in the well-tested regime and stay out of corner cases. Specifically, we will:

- periodically restart operating systems and applications to avoid software aging effects,

- avoid interrupting the computer when it is working (especially when it is installing or updating programs) since early-exit code is pretty much always wrong,

- do things more slowly when the computer appears overloaded—in contrast, computer novices often make overload worse by clicking on things more and more times,

- avoid too much multitasking,

- avoid esoteric configuration options,

- avoid relying on implicit operations, such as the fact that MS Word is supposed to ask us if we want to save a document on quit if unsaved changes exist.

I have a hunch that one of the reasons people mistrust Windows is that these tactics are more necessary there. For example, I never let my wife’s Windows 7 machine go for more than about two weeks without restarting it, whereas I reboot my Linux box only every few months. One time I had a job doing software development on Windows 3.1 and my computer generally had to be rebooted at least twice a day if it was to continue working. Of the half-dozen Windows laptops that I’ve owned, none of them could reliably suspend/resume ten times without being rebooted. I didn’t start this post intending to pick on Microsoft, but their systems have been involved with all of my most brutal conditioning sessions.

Boris Beizer, in his entertaining but long-forgotten book *The Frozen Keyboard*, tells this story:

My wife’s had no computer training. She had a big writing chore to do and a word-processor was the tool of choice. The package was good, but like most, it had bugs. We used the same hardware and software (she for her notes and I for my books) over a period of several months. The program would occasionally crash for her, but not for me. I couldn’t understand it. My typing is faster than hers. I’m more abusive of the equipment than she. And I used the equipment for about ten hours for each of hers. By any measure, I should have had the problems far more often. Yet, something she did triggered bugs which I couldn’t trigger by trying. How do we explain this mystery? What do we learn from it?

The answer came only after I spent hours watching her use of the system and comparing it to mine. She didn’t know which operations were difficult for the software and consequently her pattern of usage and keystrokes did not avoid potentially troublesome areas. I did understand and I unconsciously avoided the trouble spots. I wasn’t testing that software, so I had no stake in making it fail—I just wanted to get my work done with the least trouble. Programmers are notoriously poor at finding their own bugs—especially subtle bugs—partially because of this immunity. Finding bugs in your own work is a form of self-immolation. We can extend this concept to explain why it is that some thoroughly tested software gets into the field and only then displays a host of bugs never before seen: the programmers achieve immunity to the bugs by subconsciously avoiding the trouble spots while testing.

Beizer’s observations lead me to the first of three reasons why I wrote this piece, which is that I think it’s useful for people who are interested in software testing to know that you can generate interesting test cases by inverting the actions we have been conditioned to take. For example, we can run the OS or application for a very long time, we can interrupt the computer while it is installing or updating something, and we can attempt to overload the computer when its response time is suffering. It is perhaps instructive that Beizer is an expert on software testing, despite also being a successful anti-tester, as described in his anecdote.
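One way to mechanize the "interrupt it while it is updating" idea is to stop an operation at every intermediate point and check whether the state left behind is still sane. The sketch below is a toy illustration, not a description of any real installer: `naive_update`, `staged_update`, `consistent`, and `interrupt_everywhere` are hypothetical names, and the updater is modeled as a Python generator whose `yield` statements mark the places where an interruption could land.

```python
_DONE = object()  # sentinel: the update ran to completion


def naive_update(state, new_version):
    """Hypothetical updater that modifies live state one field at a time."""
    state["binary"] = new_version
    yield  # an interruption here leaves binary and config out of sync
    state["config"] = new_version
    yield


def staged_update(state, new_version):
    """Hypothetical updater that prepares everything first, then switches over."""
    staged = {"binary": new_version, "config": new_version}
    yield  # an interruption here leaves the old state untouched
    state.update(staged)
    yield


def consistent(state):
    """Assumed invariant: binary and config must always be the same version."""
    return state["binary"] == state["config"]


def interrupt_everywhere(update, initial, new_version):
    """Interrupt `update` after k steps for every possible k.

    Returns the list of k values at which the abandoned state
    violates the invariant.
    """
    failures = []
    k = 0
    while True:
        state = dict(initial)  # fresh copy for each trial
        steps = update(state, new_version)
        finished = False
        for _ in range(k):
            if next(steps, _DONE) is _DONE:
                finished = True
                break
        if finished:
            return failures  # every interruption point has been tried
        if not consistent(state):
            failures.append(k)
        k += 1


if __name__ == "__main__":
    start = {"binary": 1, "config": 1}
    print("naive: ", interrupt_everywhere(naive_update, start, 2))
    print("staged:", interrupt_everywhere(staged_update, start, 2))
```

A conditioned user would never stop the update halfway, so the naive version looks fine in everyday use; the harness does exactly the thing we have been trained not to do.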

The second reason I wrote this piece is that I think operant conditioning provides a partial explanation for the apparent paradox where many people believe that most software works pretty well most of the time, while others believe that software is basically crap. People in the latter camp, I believe, are somehow able to resist or discard their conditioning in order to use software in a more unbiased way. Or maybe they’re just slow learners. Either way, those people would make amazing members of a software testing team.

Finally, I think that operant conditioning by software bugs is perhaps worthy of some actual research, as opposed to my idle observations here. An HCI researcher could examine these effects by seeding a program with bugs and observing the resulting usage patterns. Another nice experiment would be to provide random punishment, in the operant-conditioning sense, by injecting failures at different rates for different users and observing the resulting behaviors. Anyone who has been in a tech support role has seen the bizarre cargo cult rituals that result from unpredictable failures.
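The failure-injection experiment could be prototyped with something like the following. This is only a minimal sketch under assumed names: `FaultInjector`, `InjectedFailure`, and `failures_seen` are hypothetical, and a real study would wrap actual application operations rather than the no-op used here. Each user gets an injector with its own failure rate, and a seeded random generator makes a session replayable.

```python
import random


class InjectedFailure(Exception):
    """Raised instead of running the real operation."""


class FaultInjector:
    """Fails wrapped operations at a fixed rate for one user (hypothetical design)."""

    def __init__(self, failure_rate, seed):
        if not 0.0 <= failure_rate <= 1.0:
            raise ValueError("failure_rate must be between 0 and 1")
        self.failure_rate = failure_rate
        self._rng = random.Random(seed)  # seeded so a session can be replayed
        self.log = []  # (operation name, was a failure injected?)

    def call(self, name, operation, *args, **kwargs):
        """Run `operation`, or raise InjectedFailure with probability failure_rate."""
        inject = self._rng.random() < self.failure_rate
        self.log.append((name, inject))
        if inject:
            raise InjectedFailure(name)
        return operation(*args, **kwargs)


def failures_seen(injector, names):
    """Attempt a no-op under each name and count the injected failures."""
    count = 0
    for name in names:
        try:
            injector.call(name, lambda: None)
        except InjectedFailure:
            count += 1
    return count


if __name__ == "__main__":
    # Assumed experimental groups: same actions, different failure rates.
    actions = ["save", "print", "quit"] * 10
    for user, rate in [("control", 0.0), ("flaky", 0.2)]:
        injector = FaultInjector(rate, seed=42)
        print(user, failures_seen(injector, actions))
```

The per-user log records which operations were attempted and which ones failed, which is the raw material for spotting avoidance behavior or superstitious rituals as they develop.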

In summary, computers are Skinner Boxes and we’re the lab rats—sometimes we get a little food, other times we get a shock.

Acknowledgment: This piece benefited from discussions with my mother, who previously worked as a software tester.

7 responses to “Operant Conditioning by Software Bugs”

I witnessed someone who, on a regular basis, manually terminated all the apps on their iPhone. As a developer, I know this to be generally useless, since the OS manages apps automatically. I think this person’s behavior was learned from other systems, probably BlackBerry, maybe even Windows.

Also: I have read reviewers of Windows 8 complaining that it was hard to figure out how to “close” running apps. When of course it’s the same idea – the OS takes care of it. But a lot of us still think it is our job.

Hi CL, I believe that I have observed the camera app on my wife’s iPhone draining the battery within a couple of hours even when in the background. From this we learned to terminate it manually. I felt kind of stupid about this but it did seem to make a difference!

In an example of synchronicity, you reminded me of this blog post: http://prog21.dadgum.com/161.html

The operant conditioning likely forces behaviours that match the developer’s initial assumptions; explicit lists of those assumptions could ease the process of understanding a software system.

Another factor for the “software is basically crap” people could be the mean time between picking up new software. People who review stuff and/or aggressively run auto-updates might provoke this.

Look, using cut and paste on Linux or using almost any word processor and trying to do serious formatting (and I indict LaTeX here too) suggests that a large chunk of software is… highly suboptimal, to put it kindly.

I can’t seem to find the link, but I believe there was a project to do distributed, crowdsourced crash-maps. So you might use an app, and get it to crash, and then send in a report, and the next person to use the app, when they did the action that you did, would get a warning.

Unrelatedly – It might be interesting to look at software not as language (digital, perfectly right or wrong), but as a plastic substance that is shaped by pressure applied by programmers forcing it through a rigid requirements/QA policy. For example, if your requirements/QA policy starts up the application and then runs it until a crash, then the early application startup parts of the software get more exercise than the “after a week” parts of the software – so software aging can be explained by the usual software reliability growth curve. If instead you had a requirements/QA policy that started the software “in the middle”, perhaps from an arbitrary state of the machine, then (assuming you actually got any software written at all) the result might be mostly immune to software aging. Some static analyses look like starting to test from “all possible middle states”.

To some extent, I think this is one of the reasons for advocating TDD; because the distribution of exercise that unit tests put on the software is “more even”, shifting requirements/QA policy to focus on unit tests results in fewer dark, dank, dusty, un-exercised, early-on-the-software-reliability-growth-curve corners.